Military AI startup Helsing raises €450M, plans to protect NATO’s border with Russia

Military AI startup Helsing has raised a whopping €450mn at a reported valuation of €5bn. The German company said the war chest will fund security for NATO’s Eastern Flank. Founded in 2021, Helsing has rapidly grown into one of Europe’s defence tech leaders. It’s now also among the continent’s most valuable AI startups. This rapid rise has coincided with increasing alarm about Russia’s threat to Europe. Since the full-scale invasion of Ukraine began in 2022, defence budgets have soared across the region. Helsing has provided an intriguing target for the funds. The company develops AI software for weapons, vehicles, and military strategy.…

This story continues at The Next Web

Source:: The Next Web

Technology Blog

- Google’s AI Overviews killed 58 per cent of publisher clicks. Now it is adding a ‘Further Exploration’ section to bring some back. May 6, 2026

- Snap lost a 400 million dollar AI deal, 20 million dollars a month to the Iran war, and 24 per cent of its stock price. The AR glasses had better work. May 6, 2026

- Chrome’s AI features can take up to 4GB of space on your computer May 6, 2026

- ServiceNow continues its AI transformation with an integrated experience May 6, 2026

- Dreame wants to kit you out with a smartphone, a smart ring, and a rocket-powered sports car May 6, 2026

New hope for European tech? VC funding rises to $29B in first half of 2024

Whisper it, but startup funding is showing signs of a rebound. Venture capital investment in Europe has risen by 12% so far in 2024, according to Dealroom. By June, the financing for startups and scaleups had reached $29.3bn. If the current spending rate continues, this year will become the third-most active ever for VC on the continent. The leading industry for investment is energy, which raised $5.6bn during the first half of 2024. This continues a trend from last year, when energy companies topped the funding charts in every quarter. There have been shifts, however, in the sector’s biggest targets. Hydrogen companies…

This story continues at The Next Web

Source:: The Next Web

SAI Group buys Get Well; aims to use AI for better patient engagement

Investment firm SAI Group this week announced it has acquired Get Well, a 24-year-old company that provides digital patient engagement technology to 1,000 healthcare organizations.

The financial terms of the deal were not disclosed.

SAI said the purchase of Get Well adds to its portfolio of AI healthcare companies. SAI plans to integrate its own generative AI (genAI) platform, the GPT-4-powered RhythmX AI, “into the patient experience inside and outside the hospital.”

(RhythmX is also the name of SAIGroup’s subsidiary company.)

Get Well’s own digital patient engagement platform, Get Well 360, already interacts with more than 10 million patients annually, offering them online point-of-care engagement and “guided care,” among other modules. The RhythmX platform offers patients prescriptive actions and recommendations doctors can drill into using a generative AI-enabled natural language interface and AI-native copilots.

“As part of SAIGroup, Get Well’s mission to enable the best patient experience will undergo a rapid transformation with AI to a full precision care platform for hospitals and ambulatory centers,” SAIGroup CEO Romesh Wadhwani said in a statement. “This strategic investment underscores SAIGroup’s commitment to innovative AI-driven solutions in healthcare and highlights our confidence in Get Well as a leader in the digital patient engagement space.”

Get Well’s competitors in the Healthcare Management System arena include Epic, Cerner, and eClinicalWorks.

Through mergers and acquisitions, SAIGroup has grown into a company with a massive trove of healthcare data from 300 million patients, 4.4 billion annual claims, and information on more than 1.8 million healthcare professionals, according to its own reports.

“Experience, which is often where engagement falls, continues to be the top outcome sought from digital investments,” but many organizations are still falling short of goals set by their executive leadership, according to Faith Adams, a Gartner senior director analyst.

As in most other industries, healthcare providers face a massive shortage of AI-skilled employees and IT pros needed to integrate new automation tools. Healthcare also faces a shortage of clinicians, which automated patient interactions could help address, according to Adams.

A 2024 survey by online education company Pluralsight showed more than 80% of IT pros think they can use AI, but just 12% have the skills and expertise to do so. That same survey showed 97% of firms that have deployed AI have benefited from it, citing increased productivity and efficiency, improved customer service, and reduced human error.

“The biggest part of the story is the shortage of AI tech experience, and patient engagement experience,” Adams said. “One of the bigger opportunities we see here is bringing together SAI’s AI expertise with Get Well’s patient engagement expertise.”

AI platforms can serve as digital tools to bolster patient access to personalized medicine and health literacy — the ability to obtain, read, understand, and use healthcare information to make appropriate health decisions and follow treatment instructions. AI tech can also help patients with their “digital literacy,” allowing them to better find, evaluate, and communicate information through digital media platforms.

In other words, instead of struggling to contact clinicians, online query and answer engines powered by AI can give patients answers based on their own health record information and clinical recommendations.

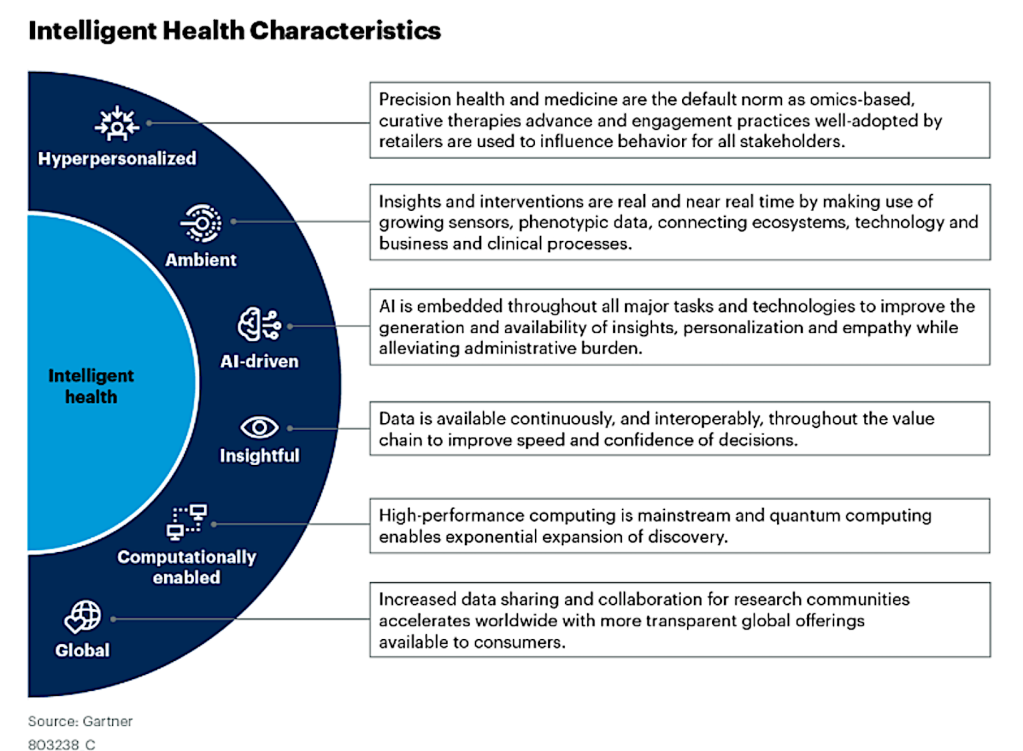

Gartner coined the phrase “Intelligent Health” last year to describe what it sees as the future of digital transformation in healthcare and the life science industries. Intelligent Health refers to the harnessing of the ever-growing volume and variety of patient and clinical data to offer providers and patients a better and more precise healthcare experience.

Gartner Inc.

“Given the complexity of healthcare patient journeys, there is really no one-size-fits-all, and this is where technology can help better support personalization [and] precision using data and insights,” Adams said. “Intelligent health is interoperable by default, relying on continuous data to deliver experience through the unification of digital and in-person care delivery that is precise, equitable and ethical.”

Every patient needs to be approached differently to drive behavioral changes, according to Adams. For example, if a patient needs to lose weight or eat healthier to lower their cholesterol and/or blood pressure levels, AI-based technology can assess their history and make recommendations.

“Patients continue to demand more from their experiences, and they have more choice now than ever. Each patient type needs to be approached differently to drive behavioral change. This [AI tool] simplifies it,” Adams said.

“There are other factors that can influence it, too, but this is always a good starting point to show the no-one-size-fits-all approach will drive behavior change and engagement.”

Source:: Computer World

OPPO F27 Pro+ Review: Durability is King

By Hisan Kidwai

After a brief break, the sub-30K segment is heating up again, with brands like Realme and…

The post OPPO F27 Pro+ Review: Durability is King appeared first on Fossbytes.

Source:: Fossbytes

How to Download Xcode on Windows in 2024?

By Hisan Kidwai

It’s no secret that Apple restricts coding in the Swift language to its Xcode platform. And…

The post How to Download Xcode on Windows in 2024? appeared first on Fossbytes.

Source:: Fossbytes

Microsoft 365 Copilot explained: genAI meets Office

Initially called Microsoft 365 Copilot when it launched in November 2023, the renamed “Copilot for Microsoft 365” brings a range of generative AI (genAI) features to office productivity apps such as Word, Outlook, Teams and Excel.

In a blog post announcing the tool, Microsoft CEO Satya Nadella described it as “the next major step in the evolution of how we interact with computing…. With our new copilot for work, we’re giving people more agency and making technology more accessible through the most universal interface — natural language.”

At launch, Microsoft explained that the Copilot “system” consists of three elements: Microsoft 365 apps such as Word, Excel, and Teams, where users interact with the AI assistant; Microsoft Graph, which includes files, documents, and data across the Microsoft 365 environment; and the OpenAI models that process user prompts, such as the GPT-4 large language model and the DALL-E 3 model for image generation.

With the tool, Microsoft aims to create a “more usable, functional assistant” for work, J.P. Gownder, vice president and principal analyst at Forrester’s Future of Work team, told Computerworld in fall 2023. “The concept is that you’re the ‘pilot,’ but the Copilot is there to take on tasks that can make life a lot easier.”

The Copilot for M365 is “part of a larger movement of generative AI that will clearly change the way that we do computing,” he said, noting how the technology has already been applied to a variety of job functions, from writing content to creating code, since ChatGPT launched in late 2022.

A Forrester report last year predicted that 6.9 million US knowledge workers — around 8% of the total — would be using Copilot for M365 by the end of 2024.

Nadella talked up the effectiveness of the M365 Copilot during a 2023 earnings call, claiming customers had seen productivity gains in line with those of GitHub Copilot, the AI assistant aimed at developers that launched two years ago. (For reference, GitHub has previously claimed developers were able to complete a single task 55% quicker thanks to GitHub Copilot, while acknowledging the challenges in measuring productivity.)

Even priced at $30 per user per month, there’s potential to deliver considerable value to businesses, assuming the Copilot delivers on its promise over time. Said Gownder: “The key issue is, ‘Does it actually save that time?’ because it’s hard to measure and we don’t really know for sure. But even conservative time savings estimates are pretty generous.”

The Copilot for M365 is billed as providing employees with access to genAI without the security concerns of consumer genAI tools; Microsoft says its models aren’t trained on customer data, for instance. But deploying the tool represents significant challenges, said Avivah Litan, distinguished vice president analyst at Gartner.

There are two primary business risks, she said: the potential for the Copilot to ‘hallucinate’ and provide inaccurate information to users, and the ability for the Copilot’s language models to access huge swathes of corporate data that’s not locked down properly.

“Information oversharing is one of the biggest issues people are going to face in the next few months, or six months to a year,” said Litan. “That’s where the rubber is going to hit the road on the risk — it’s not so much giving the data to Microsoft or OpenAI or Google, it’s all the exposure internally.”

Copilot for Microsoft 365 features: How do you use it?

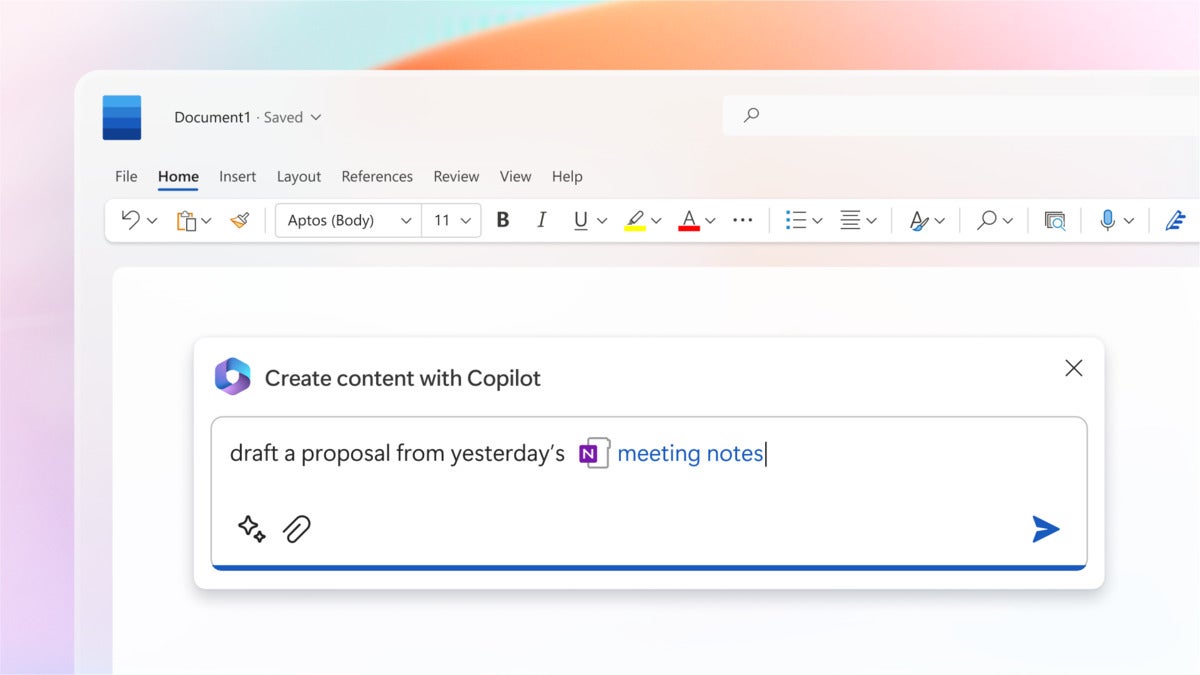

Copilot interactions within apps can take a variety of forms, depending on the application. In many cases, users will interact with it via the chat interface available in a sidebar; Copilot functionality is also built more directly into some apps, such as a pop-up in a Word document or Outlook email, for instance.

Here’s how the Copilot works in some M365 apps.

In a Word doc, it can suggest improvements to existing text or let users create a first draft from scratch. To generate a draft, a user can ask Copilot in natural language to create text based on a prompt, and can upload additional files and sources of information to guide the AI assistant. Once the draft is created, the user can edit the document, adjust the style, or ask the Copilot to redo the whole thing. A Copilot sidebar provides space for more interactions with the bot, which also suggests prompts to improve the draft, such as adding images or an FAQ section, or summarizing the text.

During a Teams video call, the Copilot provides a recap of what’s been discussed so far, with a brief overview of conversation points in real time. It’s also possible to ask the AI assistant for feedback on people’s views during a call, or what questions remain unresolved. Those unable to attend a particular meeting can send the AI assistant in their place to provide a summary of what they missed and action items they need to follow up on.

Copilot can help a Word user draft a proposal from meeting notes.

In PowerPoint, Copilot can automatically turn a Word document into draft slides that can then be adapted via natural language in the Copilot sidebar. It can also generate suggested speaker notes to go with the slides and add more images.

These are just some examples. Other apps that feature Copilot integration include Excel, Outlook, OneNote, Loop, and Whiteboard.

The other way to interact with Copilot is via a separate chat interface that’s accessible via Teams. Here, the Copilot works as a search tool that surfaces information from a range of sources, including documents, calendars, emails, and chats. For instance, an employee could ask for an update on a project, and get a summary of relevant team communications and documents already created, with links to sources.

Microsoft will extend Copilot’s reach into other apps workers use via “plugins” — essentially third-party app integrations. These will allow the assistant to tap into data held in apps from other software vendors including Atlassian, ServiceNow, and Mural. Fifty such plugins are available, with “thousands” more expected eventually, Microsoft said.

How much does Copilot cost — is it worth $30 per user, per month?

The main Microsoft 365 Copilot is available for enterprise customers on E3, E5, F1 and F3 plans, as well as Office E1, E3, E5, and Apps for Enterprise. It’s also available for smaller business customers on the following plans: Business Basic, Business Standard, Business Premium, and Apps for Business.

In each case, the Copilot for Microsoft 365 costs an additional $30 per user each month.

It’s a significant extra expense given that M365 subscriptions start at $6 per user each month for Business Basic and go up to $55 per user each month for E5. Part of this is due to the high computing costs Microsoft incurs to run the Copilot, said Raúl Castañón, senior research analyst at 451 Research, a part of S&P Global Market Intelligence.

“Microsoft is likely looking to avoid the challenges faced with GitHub Copilot, which was made generally available in mid-2022 for $10/month and, despite surpassing more than 1.5 million users, reportedly remains unprofitable,” said Castañón.

In addition to the core Copilot for M365, job role-specific Copilots are available as paid add-ons. Sales and service Copilots each cost an additional $20 per user each month, while a finance Copilot is currently in preview.

The pricing strategy reflects Microsoft’s confidence in the impact that genAI will have on workforce productivity.

Per Forrester’s calculations in the “Build Your Business Case For Microsoft 365 Copilot” report, an employee earning $120,000 annually — roughly $57 per hour — might save four hours a month on various productivity tasks; those four hours would be worth around $230 a month. In that scenario, it would make sense to invest in Copilot for an employee earning even half that amount, and that’s leaving aside less tangible benefits around employee experience when automating mundane tasks.
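The arithmetic behind that scenario can be checked with a short sketch. It is not Forrester’s model: the 2,080-hour working year (40 hours over 52 weeks) is an assumption chosen because it reproduces the roughly $57 hourly figure, and the function names are illustrative only.

```python
# Minimal sketch of the break-even arithmetic described above.
# Assumption: a working year of 2,080 hours (40 hours x 52 weeks).
HOURS_PER_YEAR = 2080


def hourly_rate(annual_salary: float) -> float:
    """Convert an annual salary into an approximate hourly rate."""
    return annual_salary / HOURS_PER_YEAR


def monthly_value_of_time_saved(annual_salary: float, hours_saved: float) -> float:
    """Dollar value of the hours an employee saves in one month."""
    return hourly_rate(annual_salary) * hours_saved


def break_even_hours(annual_salary: float, license_cost: float = 30.0) -> float:
    """Hours per month an employee must save to cover the license fee."""
    return license_cost / hourly_rate(annual_salary)


if __name__ == "__main__":
    for salary in (120_000, 60_000):
        value = monthly_value_of_time_saved(salary, hours_saved=4)
        hours = break_even_hours(salary)
        print(f"${salary:,}: 4 hours saved is worth ${value:.2f}; "
              f"break-even at {hours:.2f} hours a month")
```

Under these assumptions, the $30 fee is covered once the higher-paid employee saves a little over half an hour a month, which is why the case still holds at half the salary.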

There are, as Forrester points out, other costs to consider beyond licensing, such as training as employees learn the new technology. Gartner also predicts that enterprise security spending will increase in the region of 10% to 15% in the next couple of years as a result of efforts to secure genAI tools (not just M365 Copilot).

Businesses are likely to take a cautious approach to deploying the Microsoft tool, at least at first. Microsoft expects revenue related to M365 Copilot to “grow gradually over time,” Microsoft CFO Amy Hood said during the company’s Q1 2024 earnings call. On the same call, Nadella noted that Copilot will be subject to the usual “enterprise cycle times in terms of adoption and ramp.”

Even if the pace of adoption is gradual, there appears to be plenty of interest in deploying it. Forrester expects around a third of M365 customers in the US to invest in Copilot in the first year. Companies that do so will provide licenses to around 40% of employees during this period, the firm estimated.

(Note: while not actually branded as Copilot, Microsoft also makes some genAI features available in Teams Premium. This includes AI-generated notes, AI-generated tasks and live translations in video calls, all of which are powered by ChatGPT AI models. For businesses that are mostly interested in AI assistant features for meetings, this offers a cheaper option than a full Copilot for M365 subscription.)

What are Microsoft’s other Copilots?

Microsoft’s Copilot is embedded in a wide array of products. Beyond the M365 suite, there are Copilots for Dynamics, Power BI, GitHub, and Microsoft’s security suite.

And then there are Copilots aimed primarily at consumer, rather than business, users.

Microsoft launched Copilot Pro in January 2024, a $20 a month subscription that provides individuals with similar functionality to the Copilot for M365. Copilot Pro customers gain access to the Copilot chatbot and genAI image creation, as well as AI assistant features in free web versions of apps such as Word, Excel, PowerPoint, and Outlook (though not Teams). Those with Microsoft 365 Personal and Family subscriptions can also access the Copilot in desktop apps.

There’s also a free version of the Copilot with access to chatbot functionality only.

The Copilot chat interface is accessible in several ways by both paid and free users. There’s a dedicated web page, a mobile app, and a chatbot built into the Windows operating system, Edge browser, and Bing search engine.

How do users interact with Copilot?

There are two basic ways users will interact with Copilot. It can be accessed directly within a particular app — to create PowerPoint slides, for example, or an email draft — or via a natural language chatbot accessible in Teams, known as Microsoft 365 Chat.

Interactions within apps can take a variety of forms, depending on the application. When Copilot is invoked in a Word document, for example, it can suggest improvements to existing text, or even create a first draft.

To generate a draft, a user can ask Copilot in natural language to create text based on a particular source of information or from a combination of sources. One example: creating a draft proposal based on meeting notes from OneNote and a product road map from another Word doc. Once a draft is created, the user can edit it, adjust the style, or ask the AI tool to redo the whole document. A Copilot sidebar provides space for more interactions with the bot, which also suggests prompts to improve the draft, such as adding images or an FAQ section.

During a Teams video call, a participant can request a recap of what’s been discussed so far, with Copilot providing a brief overview of conversation points in real time via the Copilot sidebar. It’s also possible to ask the AI assistant for feedback on people’s views during the call, or what questions remain unresolved. Those unable to attend a particular meeting can send the AI assistant in their place to provide a summary of what they missed and action items they need to follow up on.

In PowerPoint, Copilot can automatically turn a Word document into draft slides that can then be adapted via natural language in the Copilot sidebar. Copilot can also generate suggested speaker notes to go with the slides and add more images.

The other way to interact with Copilot is via Microsoft 365 Chat, which is accessible as a chatbot within Teams. Here, Microsoft 365 Chat works as a search tool that surfaces information from a range of sources, including documents, calendars, emails, and chats. For instance, an employee could ask for an update on a project, and get a summary of relevant team communications and documents already created, with links to sources.

How are early customers using Copilot?

Prior to launch, many businesses accessed the Copilot for M365 as part of a paid early access program (EAP); it began with a small number of participants before growing to several hundred customers, including Chevron, Goodyear, and General Motors.

One of those involved in the EAP was marketing firm Dentsu, which began deploying 300 licenses to tech staff and then employees across its business lines globally. The most popular use case so far is summarization of information generated in M365 apps — a Teams call being one example.

“Summarization is definitely the most common use case we see right out of the box, because it’s an easy prompt: you don’t really have to do any prompt engineering…, it’s suggested by Copilot,” Kate Slade, director of emerging technology enablement at Dentsu, said.

Staffers would also access M365 Chat functions to prepare for meetings, for instance, with the ability to quickly pull information from different sources. This could mean finding information from a project several years ago “without having to hunt through a folder maze,” said Slade.

The feedback from workers at Dentsu has been overwhelmingly positive, said Slade, with a waiting list now in place for those who want to use the AI tool.

“It’s reducing the time that they spend on [tasks] and giving them back time to be more creative, more strategic, or just be a human and connect peer to peer in Teams meetings,” she said. “That’s been one of the biggest impacts that we’ve seen…, just helping make time for the higher-level cognitive tasks that people have to do.”

Use cases have varied between different roles. Dentsu’s graphic designers would get less value from using Copilot in PowerPoint, for example: “They’re going to create really visually stunning pieces themselves and not really be satisfied with that out-of-the-box capability,” said Slade. “But those same creatives might get a lot of benefits from Copilot in Excel and being able to use natural language to say, ‘Hey, I need to do some analysis on this table,’ or ‘What are key trends from this data?’ or ‘I want to add a column that does this or that.’”

How does Copilot compare with other productivity and collaboration genAI tools?

Most vendors in the productivity and collaboration software market have added genAI to their offerings at this point.

Google, Microsoft’s main competitor in the productivity software arena, launched Duet AI for Workspace in 2023 and later rebranded it as Gemini Enterprise ($30 per user each month) and Gemini Business ($20 per user each month). Google’s AI assistant can summarize Gmail conversations, draft texts, and generate images in Workspace apps such as Docs, Sheets, and Slides.

Slack, the collaboration software firm owned by Salesforce and a rival to Microsoft Teams, launched its Slack AI feature in February. Other firms that compete with elements of the Microsoft 365 portfolio, such as Zoom, Box, Coda, and Cisco, have also touted genAI plans.

Meanwhile, Apple announced that it will build generative AI features into its range of productivity tools.

Then there are the AI-specific tools, such as OpenAI’s ChatGPT, as well as Claude, Perplexity AI, Jasper AI and others, that also provide text generation and document summarization features.

Copilot has some advantages over rivals. One is Microsoft’s dominant position in the productivity and collaboration software market, said Castañón. “The key advantage the Microsoft 365 Copilot will have is that — like other previous initiatives such as Teams — it has a ‘ready-made’ opportunity with Microsoft’s collaboration and productivity portfolio and its extensive global footprint,” he said.

Microsoft’s close partnership with OpenAI (Microsoft has invested billions of dollars in the company on several occasions since 2019 and has a large non-controlling share of the business) likely helped it build generative AI across its applications at a faster rate than rivals.

“Its investment in OpenAI has already had an impact, allowing it to accelerate the use of generative AI/LLMs in its products, jumping ahead of Google Cloud and other competitors,” said Castañón.

What are the genAI risks for businesses? ‘Hallucinations’ and data protection

Along with the potential benefits of genAI tools like the Copilot for M365, businesses should consider risks. These include the hallucinations large language models (LLMs) are prone to, where incorrect information is provided to employees.

“Copilot is generative AI — it definitely can hallucinate,” said Slade, citing the example of one employee who asked the Copilot to provide a summary of pro bono work completed that month to add to their timecard and send to their manager. A detailed two-page summary document was created without issue; however, the address of all meetings was given as “123 Main Street, City, USA” — an error that’s easily noticed, but an indication of the care required by users when relying on Copilot.

The occurrence of hallucinations can be reduced by improving prompts, but Dentsu staff have been advised to treat outputs from the genAI assistant with caution. “The more context you can give it generally, the closer you’re going to get to a final output,” said Slade. “But it’s never going to replace the need for human review and fact check.

“As much as you can, level-set expectations and communicate to your first users that this is still an evolving technology. It’s a first draft, it’s not a final draft — it’s going to hallucinate and mess up sometimes.”

Tools that filter Copilot outputs are emerging that could help here, said Litan, but this is likely to remain a key challenge for businesses for the foreseeable future.

Another risk relates to one of the major strengths of the Copilot: its ability to sift through files and data across a company’s M365 environment using natural language inputs.

While Copilot is only able to access files according to permissions granted to individual employees, the reality is that businesses often fail to adequately label sensitive documents. This means individual employees might suddenly realize they are able to ask Copilot to provide details on payroll or customer information if it hasn’t been locked down with the right permissions.

A 2022 report by data security firm Varonis claimed that one in 10 files hosted in SaaS environments is accessible by all staff; an earlier 2019 report put that figure — including cloud and on-prem files and folders — at 22%. In many cases, this can mean organization-wide permissions are granted to thousands of sensitive files, Varonis said.
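To make the oversharing problem concrete, here is a minimal sketch of the kind of check a data governance review might run before a rollout. It does not use any Microsoft or Varonis API: the `FileRecord` type, the `Everyone` group name, and the sample inventory are all hypothetical, and a real audit would pull permissions from the organization’s own systems.

```python
# Hypothetical sketch: flag files that are open to the whole organization.
# FileRecord, the "Everyone" group, and SAMPLE_INVENTORY are illustrative
# assumptions, not part of any real product or API.
from dataclasses import dataclass

ORG_WIDE_GROUP = "Everyone"


@dataclass(frozen=True)
class FileRecord:
    path: str
    shared_with: frozenset  # names of groups that can open the file
    sensitive: bool         # whether the file holds sensitive data


def exposure_summary(files):
    """Return (share of files open to all staff, paths of sensitive open files)."""
    exposed = [f for f in files if ORG_WIDE_GROUP in f.shared_with]
    share = len(exposed) / len(files) if files else 0.0
    sensitive_paths = [f.path for f in exposed if f.sensitive]
    return share, sensitive_paths


# Ten made-up files, one of which is a payroll draft shared with all staff.
SAMPLE_INVENTORY = [
    FileRecord("stores/payroll-draft.xlsx", frozenset({"Everyone"}), True),
] + [
    FileRecord(f"projects/notes-{i}.docx", frozenset({"Project Team"}), False)
    for i in range(9)
]

if __name__ == "__main__":
    share, sensitive_paths = exposure_summary(SAMPLE_INVENTORY)
    print(f"{share:.0%} of files are open to all staff")
    print("Sensitive and open:", sensitive_paths)
```

In this made-up inventory, one file in 10 is open to everyone, and it happens to be the payroll draft: exactly the kind of document an employee could surface by asking Copilot a natural language question.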

In many cases, the most important data, around payroll, for instance, will have strict permissions in place. A greater challenge lies in securing unstructured data, with sensitive information finding its way into a wide range of documents created by individual employees — a store manager planning payroll in an Excel spreadsheet before updating a central system, for example. This is similar to a situation that the CTO of an unnamed US restaurant chain encountered during the EAP, said Litan.

“There’s a lot of personal data that’s kept on spreadsheets belonging to individual managers,” said Litan. “There’s also a lot of intellectual property that’s kept on Word documents in SharePoint or Teams or OneDrive.”

“You don’t realize how much you have access to in the average company,” said Matt Radolec, vice president for incident response and cloud operations at Varonis. “An assumption you could have is that people generally lock this stuff down: they do not. Things are generally open.”

Another consideration is that employees often end up storing files relating to their personal lives on work laptops.

“Employees use their desktops for personal work, too — most of them don’t have separate laptops,” said Litan. “So you’re going to have to give employees time to get rid of all their personal data. And sometimes you can’t, they can’t just take it off the system that easily because they’re locked down — you can’t put USB drives in [to corporate devices, in some cases].

“So it’s just a lot of processes companies have to go through. I’m on calls with clients every day on the risk. This one really hits them.”

Getting data governance in order is a process that could take businesses more than a year to get sorted, said Litan. “There are no shortcuts. You’ve got to go through the entire organization and set up the permissions properly,” she said.

In Radolec’s view, very few M365 customers have yet adequately addressed the risks around data access within their organization. “I think a lot of them are just planning to do the blocking and tackling after they get started,” he said. “We’ll see to what degree of effectiveness that is [after launch]. We’re right around the corner from seeing how well people will fare with it.”

The Copilot for M365 pros and cons

Pros:

- Boost to productivity. GenAI features can save time for users by automating certain tasks.

- Breadth of features. Copilot for M365 is built into the productivity apps that many workers use on a daily basis, including Word, Excel, Outlook and Teams.

- Responses generated by Copilot for M365 are anchored in the emails, files, calendars, meetings, contacts, and other information contained in Microsoft 365. This means Copilot for M365 can arguably offer greater insights into work data than any other generative AI tool.

- Enterprise-grade privacy and security controls. Unlike consumer AI assistants, Microsoft promises that customer data won’t be used to train Copilot models. It also offers tools to help manage access to data in M365 apps.

Cons:

- Price. GenAI isn’t cheap and M365 customers are required to pay a significant additional fee each month for access to Copilot features. An individual employee might not need access to Copilot in more than a couple of M365 apps.

- Need for employee training. Getting the most out of genAI tools will require guidance around effective prompts, particularly for employees that are unfamiliar with the technology — an additional cost businesses must factor in.

- Accuracy and hallucinations. LLMs are notoriously unreliable, confidently offering answers that are incorrect. This is a particular concern when it comes to business data, and users must be on the lookout for errors in Copilot outputs.

- Data protection risks. The ability for Copilot for M365 to access a wide range of corporate data means businesses must be careful to ensure that sensitive documents are not exposed.

- The Copilot functionality in Excel is limited at this stage.

Source:: Computer World

PhotonDelta’s new Silicon Valley hub to merge Dutch and US photonic chip expertise

PhotonDelta, a photonic chip accelerator in the Netherlands, has opened an office in Silicon Valley — marking a milestone moment for the Dutch semiconductor sector. The move is part of PhotonDelta’s goal to create a unified photonic chip industry that leverages the capabilities of both European and US-based companies. Founded in 2018, the organisation has built an integrated photonics ecosystem in the Netherlands, which boasts an end-to-end value chain, from design and manufacturing to packaging and testing. In 2022, it received a €1.1bn investment from the Dutch government to boost the country’s position in the field. Photonic chips use light…

This story continues at The Next Web

Source:: The Next Web

Ariane 6 has lift off! Historic rocket launches Europe back into space

Finally, Europe has regained independent access to space. The continent reestablished a sovereign launch capacity on Tuesday with the maiden flight of the Ariane 6 rocket. Built to take satellites into orbit, Ariane 6 lifted off from the European Spaceport in French Guiana at 16:00 local time (21:00 CEST). The rocket is now soaring into the cosmos with a payload of satellites and experiments. Once the cargo is dropped off, Ariane 6’s upper stage will burn up to reduce space trash. The demonstration mission aims to prove the launcher’s capabilities. It also closes a dismal chapter for the European Space Agency (ESA).…

This story continues at The Next Web

Source:: The Next Web

Meta and Vodafone collaborate to boost short-form video quality across Europe

In a collab between big tech and telcos, Meta and Vodafone today announced the roll-out of network optimisation across 11 different markets in Europe to free up capacity and boost video quality. It is no secret that video content has exploded on the internet over the past couple of years. And it’s not just your ice-bathing guys or gals pushing the latest longevity schtick on YouTube, or Lady Gaga doing the “Wednesday dance.” Everyone from LinkedIn “thought leaders” to grandmothers dishing out life and baking advice are posting TikToks, reels, stories, and all the other kinds of short video snippets…

This story continues at The Next Web

Source:: The Next Web

OpenAI models still available in China via Azure cloud despite company ban

OpenAI models are still accessible through Microsoft Azure’s cloud in China despite the fact that the company has banned the use of these models in the region. The backdoor access to the models is part of a changing dynamic in China’s tech space, where emerging players hope to fill the gap the ban is poised to leave in the market, even as US-based tech firms look to circumvent growing trade restrictions.

Azure China operates as a joint venture with local company 21Vianet, which offers OpenAI’s service, according to an exclusive report by The Information on Monday. Three Azure customers in China also confirmed to the publication that they still have access to OpenAI’s models; two claimed they’ve used OpenAI’s API to train AI models sold to Chinese customers.

Microsoft confirmed to Computerworld Tuesday that Azure regions operated by 21Vianet are physically separated instances from Microsoft’s global cloud, though they are built on the same cloud technical base as its global peers. The company did not confirm or deny that access to OpenAI is still possible through Azure in China.

Two weeks ago OpenAI sent letters to Chinese users warning it plans to cut off its AI development software and tools starting in July, according to multiple reports, including one by Time magazine. This caused a rush by other China-based AI companies to incentivize developers using OpenAI to switch to their platforms.

“Already we see Baidu, Tencent, Alibaba and many other Chinese companies stepping in with heavy discounts in an attempt to pick up current OpenAI users in China,” said Brad Shimmin, chief analyst, AI and data analytics, at Omdia.

Baidu, for example, has promised free AI model fine-tuning and expert guidance on its flagship Ernie model, along with 50 million free tokens developers can use to query the bot, according to the Time report. Alibaba and Tencent posted ads encouraging the move, while Chinese technology pioneer Kai-fu Lee’s 01.AI is promoting heavy discounts to use its service, Time reported.

Meanwhile, at the World AI Conference in Shanghai last week, another Chinese AI company, SenseTime, unveiled its latest model, SenseNova 5.5; like Baidu, it offered companies 50 million free tokens to use the model, according to a separate report by The Guardian. SenseTime also promised to deploy staff for free to help new clients migrate from OpenAI to its AI tools.

Getting around trade restrictions

Microsoft invested billions of dollars in OpenAI in January 2023 and is closely aligned with the ChatGPT maker, integrating its technology through its own AI chatbot called Copilot, which is hosted on Azure and an integral part of its own products and services.

Microsoft did not provide a motive for allowing access to OpenAI in China through Azure. Shimmin, however, noted that China is a “sizeable market opportunity” for “mega-brands” like Microsoft, Google, Meta and Apple, “one worth the additional cost of establishing sometimes complex operating policies in order to do business in-country.”

For many companies operating within China’s borders, restrictions on technology and other products from US vendors are nothing new given the long-term battle between the two nations over tech supremacy. “Many companies have and are actively circumventing in-house blocks from the government using VPN services,” Shimmin said.

The US most recently imposed a series of tight restrictions on the export of microprocessors to China. However, US President Joseph R. Biden Jr. made it clear last year that the tech trade war with China extends to other technology, including AI.

A competitive advantage

In addition to OpenAI, a number of US-based AI services aren’t currently operating in China, including Anthropic, which does not support mainland China or Hong Kong, and Amazon Bedrock from AWS, which is only available in the region in Singapore, Japan, and Australia, Shimmin said.

Microsoft’s circumvention of the OpenAI ban “underscores its commitment to the region and to its customers,” Shimmin said.

It also could help the company maintain its competitive edge and market share, not only in AI but also in China’s lucrative cloud services market, even while keeping its relationship with OpenAI on track, said Stephen Kowski, Field CTO at SlashNext Email Security+.

“By offering continued access to OpenAI models, Microsoft can attract and retain enterprise customers seeking advanced AI capabilities,” he said. “This approach allows Microsoft to balance its partnership with OpenAI and its business interests in China.”

When given the choice to access OpenAI GPT models directly from OpenAI or via Microsoft’s Azure OpenAI Service, most enterprise customers would likely opt for Microsoft, Shimmin noted, “because they can access GPT without worrying about issues like data leakage or model privacy/security.”

Source:: Computer World

Microsoft mandates Chinese staff to use iPhones, not Android

Microsoft has ordered its staff in China to use iPhones for their work starting in September.

The decision effectively bars the use of Android smartphones by the tech giant’s Chinese staffers, Bloomberg reports.

The decision has more to do with standardising use of the Microsoft Authenticator and Identity Pass app among all personnel rather than security concerns about the Android mobile operating system.

Source:: Computer World

Microsoft employees must use Apple iPhones in China

In a step that perhaps symbolizes the steady erosion of bridges between nations, Microsoft is ordering its staff in China to abandon Android phones to exclusively use iPhones.

The ban begins in September, when staff will be required to use iPhones for work — specifically for identity verification when logging into devices. Microsoft wants all its staff to use Microsoft Authenticator and Identity Pass. Microsoft is going to distribute iPhones to employees that currently use Android devices as part of the initiative, a report from investing.com claims.

That’s what I call fragmentation

What makes that decision problematic is that in China there is no Google Play store, which means Android app stores are fragmented, with local Chinese manufacturers offering their own app platforms. Chinese smartphone companies are also building their own operating systems, further fragmenting the mobile landscape there.

This may become a bigger problem in the future as regulators force Apple to support sideloading: “Forced sideloading could open the door to risks like fake apps, malware, and social engineering attacks that have long plagued the Android ecosystem,” Hexnode CEO Apu Pavithran recently warned.

Microsoft’s decision to coalesce around the iPhone echoes and reflects what’s allegedly taking place in China, where a growing number of government agencies and companies are asking staffers to avoid using foreign-owned devices. That’s yet another manifestation of the growing political tension between Washington and Beijing.

Microsoft didn’t get mobile

But beyond the story of political conflict lurk two additional realities. Not only does Microsoft’s decision illustrate the security hazards of a fragmented app store market, it also shows the extent to which the developer has failed to secure a strong foothold in the mobile device market.

Cast your mind back — and it really isn’t so long ago — to a time when the notion that Microsoft would recommend its employees use Apple iPhones would have been unthinkable. Things have changed, perhaps for the better, as the additional security benefits unlocked through multi-platform enterprise deployments are now widely understood.

Political tensions remain

Apple may have cause for concern about Microsoft’s decision, as it sheds light on the delicate dance it is engaged in. Apple has been doing its diplomatic best to maintain cordial relationships in both China and the US.

All parties benefit in the dance. Both the US and China enjoy the economic benefits the relationship delivers, particularly (at least at present) around employment across the iPhone factories in China and wider iOS ecosystems elsewhere. Apple in China creates lots of wealth that lands in the exchequers of both nations, even as the tech itself enhances productivity.

Apple is, of course, not blind to the growing tension between the two nations. It’s rapidly increasing investments in India and manufacturing hubs elsewhere across the APAC region, evidence of that awareness. But even now the vast majority of its products are made in China. Building a replacement manufacturing ecosystem was always going to take vast amounts of money and time, and it wasn’t merely the pandemic that forced Apple’s operations staff to accelerate investment in manufacturing outside of China.

It’s complicated

One thing Apple doesn’t need is for trading conditions to worsen in what remains its biggest market outside the US. The slow move by China’s government to reject iPhone use at work is potentially as significant a problem to the company as the US government’s poorly considered anti-trust litigation against it. Both sets of decisions are likely to hit Apple’s bottom line, even as the gulf between the two nations continues to grow.

The race to AI is unlikely to improve things. The US has already taken steps in the form of sanctions to hamper China’s progress in AI development, though the impact seems limited. At the same time, Apple’s decision to introduce its own AI tools first only in the US, and to confirm that the EU will not gain access to them for some time yet, reflects a similar story of disunity as nations vie for tech prominence.

Source:: Computer World

Europe’s Ariane 6 ready for launch: Here’s how the rocket will reach orbit

Europe is set to regain independent access to space tomorrow, July 9, when the long-awaited Ariane 6 rocket lifts off for the first time. The heavy-lift satellite launcher — commissioned by the European Space Agency (ESA) and made by ArianeGroup — was supposed to replace its predecessor, Ariane 5, right after its retirement a year ago. But a series of delays in developing Ariane 6, problems with the Vega-C small-lift launcher, and the loss of access to Russia’s Soyuz rockets following the full-scale invasion of Ukraine, left Europe with no launch system of its own. As a result, for the…

This story continues at The Next Web

Source:: The Next Web

Desperate for power, AI hosts turn to nuclear industry

As data centers grow to run larger artificial intelligence (AI) models to feed a breakneck adoption rate, the electricity needed to power vast numbers of GPU-filled servers is skyrocketing.

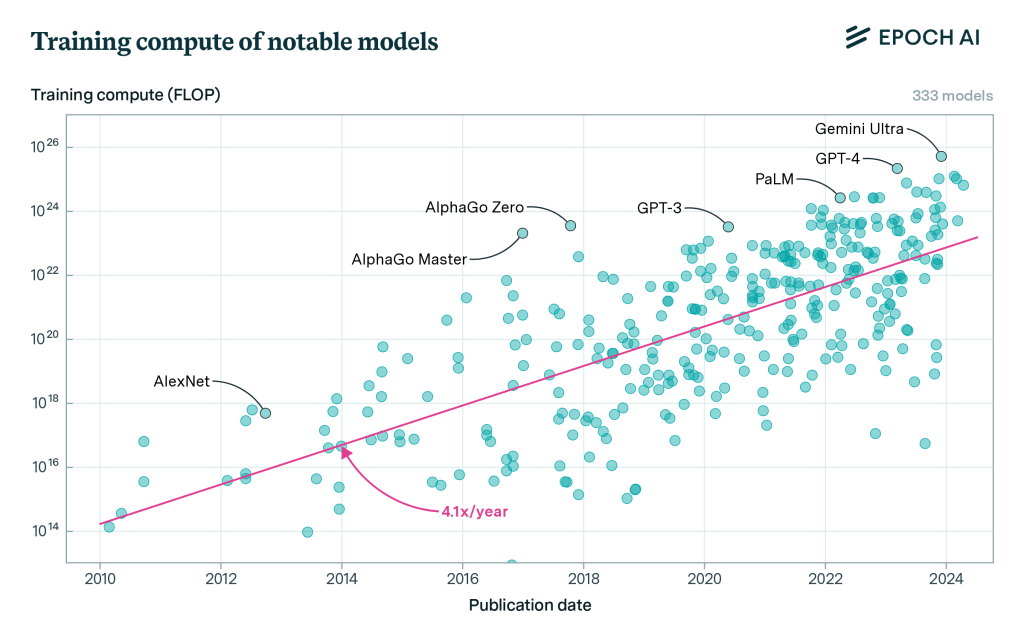

The compute capacity needed to power AI large language models (LLMs) has grown four to five times per year since 2010, and that includes the biggest models released by OpenAI, Meta, and Google DeepMind, according to a study by Epoch AI, a research institute investigating key AI trends.

Epoch AI

AI service providers such as Amazon Web Services, Microsoft, and Google have been on the hunt for power providers to meet the growing electricity demands of their data centers, and that has landed them squarely in front of nuclear power plants. The White House recently announced plans to support the development of new nuclear power plants as part of its initiative to increase carbon-free electricity or green power sources.

AI as energy devourer

The computational power required for sustaining AI’s rise is doubling roughly every 100 days, according to the World Economic Forum (WEF). At that rate, the organization said, it is urgent for the progression of AI to be balanced “with the imperatives of sustainability.”

“The environmental footprint of these advancements often remains overlooked,” the Geneva-based, nongovernmental organization think tank stated. For example, to achieve a tenfold improvement in AI model efficiency, the computational power demand could surge by up to 10,000 times. The energy required to run AI tasks is already accelerating with an annual growth rate between 26% and 36%.

“This means by 2028, AI could be using more power than the entire country of Iceland used in 2021,” the WEF said.
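The WEF's two figures describe different quantities on different time scales: compute demand doubling every 100 days, and energy use growing 26% to 36% a year. A minimal sketch of the arithmetic puts both on an annual footing. The function names, the 365-day year, and the three-year horizon are illustrative assumptions, not figures from the WEF.

```python
def annual_factor_from_doubling(doubling_days: float, days_per_year: float = 365.0) -> float:
    """Convert a doubling time in days into a growth multiple per year."""
    return 2.0 ** (days_per_year / doubling_days)


def compound(rate: float, years: int) -> float:
    """Total growth multiple after compounding an annual rate for `years` years."""
    return (1.0 + rate) ** years


if __name__ == "__main__":
    # Compute demand: doubling roughly every 100 days (WEF figure quoted above).
    factor = annual_factor_from_doubling(100)
    print(f"Compute demand: about {factor:.1f}x per year")

    # Energy use: 26% to 36% annual growth, over an assumed three-year horizon.
    for rate in (0.26, 0.36):
        print(f"Energy at {rate:.0%}/yr over 3 years: {compound(rate, 3):.2f}x")
```

Under these assumptions, compute demand grows by roughly an order of magnitude a year, while energy use at the quoted rates roughly doubles to two-and-a-half-fold over three years.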

Put simply, “AI is not very green,” said Jack Gold, principal analyst with tech industry research firm J. Gold Associates.

Large language models (LLMs), the algorithmic foundation for AI, train themselves on vast amounts of data scoured from the internet and other sources. It is the process of training AI models (i.e., LLMs) and not the act of chatbots and other AI tools offering users answers based on that data — known as “inference” — that requires the overwhelming majority of compute and electrical power.

And, while LLMs won’t be training themselves 100% of the time, the data centers in which they’re located require that peak power always be available. “If you turn on every light in your house, you don’t want them to dim. That’s the real issue here,” Gold said.

“The bottom line is these things are taking a ton of power. Every time you plug in an Nvidia H100 module or anyone’s GPU for that matter, it’s a kilowatt of power being used. Think about 10,000 of those or 100,000 of those, like Elon Musk wants to deploy,” Gold said.
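Gold's rule of thumb scales easily. The sketch below takes his rough figure of one kilowatt per GPU and, as a simplifying assumption of ours, treats every GPU as drawing that power around the clock, ignoring cooling and other data center overhead. The function names are hypothetical.

```python
KW_PER_GPU = 1.0           # Gold's rough per-GPU figure, not a measured value
HOURS_PER_YEAR = 24 * 365  # assumes continuous operation, no leap day


def fleet_power_mw(gpus: int, kw_per_gpu: float = KW_PER_GPU) -> float:
    """Instantaneous draw of a GPU fleet in megawatts."""
    return gpus * kw_per_gpu / 1000.0


def annual_energy_gwh(gpus: int, kw_per_gpu: float = KW_PER_GPU) -> float:
    """Energy used in a year at constant full draw, in gigawatt-hours."""
    return fleet_power_mw(gpus, kw_per_gpu) * HOURS_PER_YEAR / 1000.0


if __name__ == "__main__":
    for gpus in (10_000, 100_000):
        print(f"{gpus:>7,} GPUs: {fleet_power_mw(gpus):.0f} MW, "
              f"{annual_energy_gwh(gpus):.1f} GWh per year")
```

On those assumptions, 10,000 GPUs draw 10 megawatts and 100,000 draw 100 megawatts, before counting any supporting infrastructure.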

The hunt for power heats up

Rather than adding new green energy to meet AI’s power demands, tech companies are seeking power from existing electricity resources. That could raise prices for other customers and hold back emission-cutting goals, according to The Wall Street Journal and other sources.

According to sources cited by the WSJ, the owners of about one-third of US nuclear power plants are in talks with tech companies to provide electricity to new data centers needed to meet the demands of an artificial-intelligence boom.

For example, Amazon Web Services is expected to close on a deal with Constellation Energy to directly supply the cloud giant with electricity from nuclear power plants. An Amazon subsidiary also spent $650 million to purchase a nuclear-powered data center from Talen Energy in Pennsylvania, and it plans to build 15 new data centers on its campus that will feed off that power, according to Pennsylvania-based The Citizen’s Voice.

One glaring problem with bringing new power online is that nuclear power plants can take a decade or more to build, Gold said.

“The power companies are having a real problem meeting the demands now,” Gold said. “To build new plants, you’ve got to go through all kinds of hoops. That’s why there’s a power plant shortage now in the country. When we get a really hot day in this country, you see brownouts.”

The available energy could go to the highest bidder. Ironically, though, the bill for that power will be borne by AI users, not its creators and providers. “Yeah, [AWS] is paying a billion dollars a year in electrical bills, but their customers are paying them $2 billion a year. That’s how commerce works,” Gold said.

“Interestingly enough, Bill Gates has an investment in a smallish nuclear power company that wants to build next-generation power plants. They want to build new plants, so it’s like a mini-Westinghouse,” Gold said. “He may be onto something, because if we keep building all these AI data centers, we’re going to need that power.”

“What we really need to do is find green AI, and that’s going to be tough,” Gold added.

Amazon said it has firmly set its sights on renewable energy for its future and set a goal to reach net-zero carbon emissions by 2040, ten years ahead of the Paris Agreement. The company, which is the world’s largest purchaser of renewable energy, hopes to match all of the electricity consumed by its operations with 100% renewable energy by 2025. It’s already reached 90%, according to a spokesperson.

“We’re also exploring new innovations and technologies and investing in other sources of clean, carbon-free energy. Our agreement with Talen Energy for carbon-free energy is one project in that effort,” the Amazon spokesperson said in an email response to Computerworld. “We know that new technology like generative AI will require a lot of compute power and energy capacity both for us and our customers — so while we’ll continue to invest in renewable energy, we’ll also explore and invest in other carbon-free energy sources to balance, including nuclear.

“There isn’t a one-size-fits-all solution when it comes to transitioning to carbon-free energy, and we believe that all viable and scalable options should be considered,” the spokesperson said.

AI as infrastructure planner

The US Department of Energy (DOE) is researching potential problems that may result from growing data center energy demands and how they may pose risks to the security and resilience of the electric grid. The agency is also employing AI to analyze and help maintain power grid stability.

The DOE’s recently released AI for Energy Report recognized that “AI itself may lead to significant load growth that adds burden to the grid.” At the same time, a DOE spokesperson said, “AI has the potential to reduce the cost to design, license, deploy, operate, and maintain energy infrastructure by hundreds of billions of dollars.”

AI-powered tools can substantially reduce the time required to consolidate and organize the DOE’s disparate information sources and optimize their data structure for use with AI models.

The DOE’s Argonne Lab has initiated a three-year pilot project with multiple work streams to assess using foundation models and other AI to improve siting, permitting, and environmental review processes, and help improve the consistency of reviews across agencies.

“We’re using AI to help support efficient generation and grid planning, and we’re using AI to help understand permitting bottlenecks for energy infrastructure,” the spokesperson said.

The future of AI is smaller, not bigger

Even as LLMs running in massive and expanding data centers operated by the likes of Amazon, IBM, Google, and others require more power, there’s a shift taking place that will likely play a key role in reducing future power needs.

Smaller, more industry- or business-focused algorithmic models can often provide better results tailored to business needs.

Organizations plan to invest 10% to 15% more on AI initiatives over the next year and a half compared to calendar year 2022, according to an IDC survey of more than 2,000 IT and line-of-business decision makers. Sixty-six percent of enterprises worldwide said they would be investing in genAI over the next 18 months, according to IDC research. Among organizations indicating that genAI will see increased IT spending in 2024, internal infrastructure will account for 46% of the total spend. The problem: a key piece of hardware needed to build out that AI infrastructure — the processors — is in short supply.

LLMs with hundreds of billions or even a trillion parameters are devouring compute cycles faster than the chips they require can be manufactured or upscaled; that can strain server capacity and lead to an unrealistically long time to train models for a particular business use.

Nvidia, the leading GPU maker, has been supplying the lion’s share of the processors for the AI industry. Nvidia rivals such as Intel and AMD have announced plans to produce new processors to meet AI demands.

“Sooner or later, scaling of GPU chips will fail to keep up with increases in model size,” said Avivah Litan, a vice president distinguished analyst with Gartner Research. “So, continuing to make models bigger and bigger is not a viable option.”

Additionally, the more amorphous data LLMs ingest, the greater the possibility of bad and inaccurate outputs. GenAI tools are basically next-word predictors, meaning flawed information fed into them can yield flawed results. (LLMs have already made some high-profile mistakes and can produce “hallucinations” where the next-word generation engines go off the rails and produce bizarre responses.)

The solution is likely that LLMs will shrink down and use proprietary information from organizations that want to take advantage of AI’s ability to automate tasks and analyze big data sets to produce valuable insights.

David Crane, undersecretary for infrastructure at the US Department of Energy’s Office of Clean Energy, said he’s “very bullish” on emerging designs for so-called small modular reactors, according to Bloomberg.

“In the future, a lot more AI is going to run on edge devices anyways, because they’re all going to be inference based, and so within two to three years that’ll be 80% to 85% of the workloads,” Gold said. “So, that becomes a more manageable problem.”

This article was updated with a response from Amazon.

Source:: Computer World

ITER troubles expose need for fusion between startups and governments

ITER, set to be the world’s largest experimental fusion reactor, has been delayed yet again. The €25bn megaproject will only switch on in 2034, and start producing energy in 2039. That’s almost a decade later than originally planned. Thirty-five nations including the UK, US, China, and Russia launched ITER in 2006 to demonstrate the scientific and technological feasibility of fusion power. But startups may end up beating them to it. As private companies race to commercialise fusion energy, it’s increasingly clear that ITER will take on a more supporting role. But that doesn’t mean it’s obsolete. ITER, one of history’s…

This story continues at The Next Web

Source:: The Next Web

Boeing and the perils of outsourcing mission-critical work

Around and around Starliner goes, and when it comes down, nobody knows. What we do know is that thanks to poor development and engineering, Boeing’s stock will come down soon.

I remember a time when Boeing was one of the top American companies. Indeed, it was the very model of a modern technology enterprise. Then things changed. In 1997, after its merger with McDonnell Douglas, the company prioritized financial engineering over actual engineering and MBAs over aeronautic engineers.

Thereafter, one questionable decision after another was made, and corners were cut. The result? A once-proud American manufacturer is now better known for the fatal crashes of its 737 Max 8 planes in 2018 and 2019 and this year’s explosive midflight loss of a 737 Max 9 door plug. These are the results of bad engineering and lousy quality assurance.

Now, as I write this, I see that Boeing’s Starliner spaceship remains parked at the International Space Station. When will it come down bearing its astronauts, Butch Wilmore and Suni Williams? I don’t know. They certainly don’t know. None of us know.

Just don’t say they’re stranded. Boeing’s vice president for its Commercial Crew Program, Mark Nappi, insists, “We’re not stuck on the ISS.” Uh, folks, they’re stranded, and the astronauts are stuck.

I doubt very much that they’ll be coming down on Starliner. Just getting to ISS, five of Starliner’s 28 thrusters stopped working due to helium leaks. NASA engineers got four thrusters to work again … and discovered four more leaks. That’s five known leaks to date.

While the astronauts get more time in space than they ever planned, down here on Earth at NASA’s White Sands Test Facility in New Mexico, they’re testing an identical thruster to work out what’s going on and how to fix it.

I wish them luck. Personally, you couldn’t get me back on Starliner for love or money. I worked at NASA’s Goddard Space Flight Center (GSFC) mission control during the Challenger disaster. That was more than bad enough.

Instead, I suggest the astronauts hitch a ride with the SpaceX Crew-8 Dragon, which is still docked at ISS.

This episode may be the straw that will break Boeing’s back.

Lessons for tech leaders

What does all this have to do with your business and technology? A lot.

What your company does may not be a matter of life and death, but your customers still expect you to do your best for them, not your stockholders. For too long, businesses have labored under the delusion that shareholder wealth is more important than the creation of stakeholder value.

The result is that companies prioritize their next quarter’s results over the overall health of their business. Specifically, Boeing didn’t just cut the fat from its teams; it also cut and outsourced its muscle. Financial engineering should never trump actual engineering.

For example, quality assurance went out the window. Instead of testing, testing, and then testing again, Boeing neglected this fundamental principle of both software and hardware engineering. Make sure you don’t.

In particular, if something is mission-critical, treat it that way!

Take, for example, the very fuselages of the 737s. In 2005, Boeing cut costs by selling its Wichita-based manufacturing site to Onex, a private equity firm that buys struggling businesses, slashes costs, and resells them. There went years of experience and a quality-first culture.

That plant would re-emerge as Spirit AeroSystems, Boeing’s third-party manufacturing partner. Whether Boeing overseeing its quality assurance would have improved anything is an open question, but there can be no doubt that Spirit’s products were shoddy and second-rate under a cost-saving mandate.

Never, ever outsource mission-critical work. What Boeing used to do best was engineering and manufacturing. I don’t know what your company does best, but neglecting your expertise to cut costs is a fool’s move.

Now Boeing has repurchased Spirit for about $8.3 billion. The company has finally figured out that it can’t recover without fixing its fundamental manufacturing problems. Somehow, I think Boeing would have done better if it’d never spun out its major manufacturing side in the first place.

The moral of the story? Never let MBAs driven by the bottom line take over an engineering company building airplanes and spaceships. The same’s true for your company. Yes, make profits for your owners, but never forget that long-term success comes from putting quality work for your customers first.

By Steven J. Vaughan-Nichols

Source:: Computer World

This tiny autonomous sailboat is charting a new course for marine science

Amar Shah, the co-founder of British AI unicorn Wayve, has backed Oshen, a budding startup building miniature autonomous sailboats. The little robots could transform the way scientists monitor everything from ocean temperatures and waves to biodiversity. The Plymouth, UK-based startup was founded last year by Anahita Laverack and Ciaran Dowds, two young engineering graduates of Imperial College London. The startup is building small solar-powered “seaborn satellites” that sail around the ocean gathering data. The little robots could make marine data acquisition more accessible than ever before. “We want to do for the sea what smallsats did for space: revolutionise access…

This story continues at The Next Web

Source:: The Next Web

Cloudflare offers simpler way to stop AI bots

Content distribution network Cloudflare is making it simpler for customers who have had enough of badly behaved bots to block them from their website.

It’s long been possible to prevent well-behaved bots from crawling your corporate website by adding a “robots.txt” file listing who’s welcome and who isn’t — and content distribution networks such as Cloudflare offer visual interfaces to simplify the creation of such files.
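As a rough illustration of how a well-behaved crawler reads such a file, the sketch below parses a hypothetical robots.txt with Python’s standard urllib.robotparser module. The rules and the example.com URL are assumptions made up for this example, not output from Cloudflare’s tools; GPTBot and Bytespider are two of the crawler names cited in Cloudflare’s post.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: turn away two AI crawlers, welcome everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: Bytespider
Disallow: /

User-agent: *
Allow: /
"""


def build_parser(text: str) -> RobotFileParser:
    """Parse robots.txt content that is already in memory."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
page = "https://example.com/articles/1"  # placeholder URL

# A compliant crawler asks before fetching and honors the answer.
print("GPTBot allowed:", parser.can_fetch("GPTBot", page))
print("Other agent allowed:", parser.can_fetch("SomeBrowser", page))
```

The file only expresses a request. Nothing in it forces a crawler to check the rules or to obey them.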

But faced with the arrival of a new generation of badly behaved AI bots, scraping content to feed their large language models, Cloudflare has introduced an even quicker way to block all such bots with one click.

“The popularity of generative AI has made the demand for content used to train models or run inference on skyrocket, and although some AI companies clearly identify their web scraping bots, not all AI companies are being transparent,” Cloudflare staff wrote in a blog post.

According to authors of the post, “Google reportedly paid $60 million a year to license Reddit’s user generated content, Scarlett Johansson alleged OpenAI used her voice for their new personal assistant without her consent, and most recently, Perplexity has been accused of impersonating legitimate visitors in order to scrape content from websites. The value of original content in bulk has never been higher.”

Last year, Cloudflare introduced a way for any of its customers, on any plan, to block specific categories of bots, including certain AI crawlers. These bots, said Cloudflare, observe the requests in sites’ robots.txt files, and do not use unlicensed content to train their models or gather data to feed retrieval-augmented generation (RAG) applications.

To do this, Cloudflare identifies bots by their “user-agent string,” a kind of calling card presented by browsers, bots and other tools requesting data from a web server.

“Even though these AI bots follow the rules, Cloudflare customers overwhelmingly opt to block them. We hear clearly that customers do not want AI bots visiting their websites, and especially those that do so dishonestly,” the post said.

The top four AI web crawlers visiting sites protected by Cloudflare were Bytespider, Amazonbot, ClaudeBot and GPTBot, it said. Bytespider, the most frequent visitor, is operated by ByteDance, the Chinese company that owns TikTok. It visited 40.4% of protected websites, and is reportedly used to gather training data for ByteDance’s large language models (LLMs), including those that support its ChatGPT rival Doubao. Amazonbot is reportedly used to index content to help Amazon’s Alexa chatbot answer questions, while ClaudeBot gathers data for Anthropic’s AI assistant Claude.

Blocking bad bots

Blocking bots based on their user-agent string will only work if such bots tell the truth about their identity — but there are signs that not all do, or not all the time.
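A minimal sketch shows why. It assumes a simple substring match on the four crawler names from Cloudflare’s post; the function name and both sample user-agent strings are invented for illustration and do not represent Cloudflare’s actual detection logic.

```python
# Crawler names taken from Cloudflare's post, lowercased for matching.
BLOCKED_AGENT_TOKENS = ("bytespider", "amazonbot", "claudebot", "gptbot")


def is_blocked_user_agent(user_agent: str) -> bool:
    """Return True if the user-agent string names a blocked crawler."""
    ua = user_agent.lower()
    return any(token in ua for token in BLOCKED_AGENT_TOKENS)


# Illustrative strings only, not captured traffic.
honest_bot = "Mozilla/5.0 (compatible; GPTBot/1.0)"
disguised_bot = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"

print(is_blocked_user_agent(honest_bot))     # the bot that identifies itself is caught
print(is_blocked_user_agent(disguised_bot))  # the bot posing as a browser is not
```

A scraper that presents a browser-like string passes straight through this kind of filter.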

In such cases, other measures will be necessary — and enterprises’ main recourse against unwanted web scraping is normally reactive: pursue legal action, according to Thomas Randall, director of AI market research at Info-Tech Research Group.

“While some software applications exist for web scraping prevention (such as DataDome and Cloudflare), these can only go so far: if an AI bot is rarely scraping a site, the bot may still go undetected,” he said via email.

To justify legal action against the operators of bad bots, enterprises will need to do more than claim that the bot didn’t leave when asked.

The best course of action, Randall said, is for “enterprises to hide intellectual property or other important information behind a membership paywall. Any scraping done behind the paywall is liable for legal action, reinforced with a clear restrictive copyright license on the site. The organization must, therefore, be prepared to legally follow through. Any scraping done on the public site is accepted as part of the organization’s risk tolerance.”

Randall noted that if organizations have the resources to go further, they could consider rate-limiting connections to their site, temporarily automatically blocking suspicious IP addresses, limiting information on why access has been blocked to a message such as “For help, contact support via [email protected]” in order to force a human interaction, and double-checking how much of their websites are available on their mobile site and apps.
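The first two of those measures, rate limiting and temporary IP blocks, can be sketched together. The class below is a hypothetical sliding-window limiter, not a description of any vendor’s product; the thresholds, the class name and the sample address (from a range reserved for documentation) are all assumptions.

```python
from collections import defaultdict, deque


class RateLimiter:
    """Sliding-window limiter that temporarily blocks IPs exceeding a quota."""

    def __init__(self, max_requests: int, window_seconds: float, block_seconds: float):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self.block_seconds = block_seconds
        self._hits = defaultdict(deque)  # ip -> timestamps of recent requests
        self._blocked_until = {}         # ip -> time at which the block expires

    def allow(self, ip: str, now: float) -> bool:
        """Return True if a request from `ip` at time `now` should be served."""
        if self._blocked_until.get(ip, 0.0) > now:
            return False  # still inside a temporary block

        hits = self._hits[ip]
        # Forget requests that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()

        if len(hits) >= self.max_requests:
            # Quota exceeded: start a temporary block and reset the history.
            self._blocked_until[ip] = now + self.block_seconds
            hits.clear()
            return False

        hits.append(now)
        return True


# Hypothetical policy: 3 requests per 10 seconds, then a 60-second block.
limiter = RateLimiter(max_requests=3, window_seconds=10.0, block_seconds=60.0)
for t in (0.0, 1.0, 2.0, 3.0, 30.0, 63.0):
    print(t, limiter.allow("203.0.113.7", now=t))
```

Passing the clock in as `now` keeps the sketch deterministic; a real deployment would more likely read a monotonic clock and keep its counters in shared storage.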

“Ultimately, scraping cannot be stopped, but hindered at best,” he said.

Source:: Computer World

Why the EU is imposing maximum tariffs of 37.6% on Chinese EVs

The EU today confirmed huge new tariffs for EVs imported from China. From Friday, provisional charges of between 17.4% and 37.6% will be imposed on the vehicles. The lowest level will apply to BYD, an automaker based in Shenzhen. Geely, which owns Volvo, Polestar, and Lotus, faces duties of 19.9%. SAIC, a Chinese state-owned carmaker, will receive the maximum 37.6%. Other companies will be subject to new tariffs of 20.8%, the weighted average. These fees will come on top of a 10% duty that was already in place. As a result, prices of EVs in Europe could increase. Beijing may…

This story continues at The Next Web

Source:: The Next Web

World’s largest fusion reactor hit by more delays and spiralling costs

ITER — which is set to become the world’s biggest fusion reactor, and one of history’s most expensive science experiments — has reached a key milestone on its mission to create a mini Sun on Earth. But despite the apparent progress, the megaproject has also been hit by yet more delays and surging costs. Nineteen giant magnetic coils — each measuring 17 metres tall and weighing 360 tonnes — have finally been delivered to southern France, where ITER is being constructed. The magnets will form a cage around a donut-shaped chamber called a tokamak. Here they will create a magnetic…

This story continues at The Next Web

Source:: The Next Web
